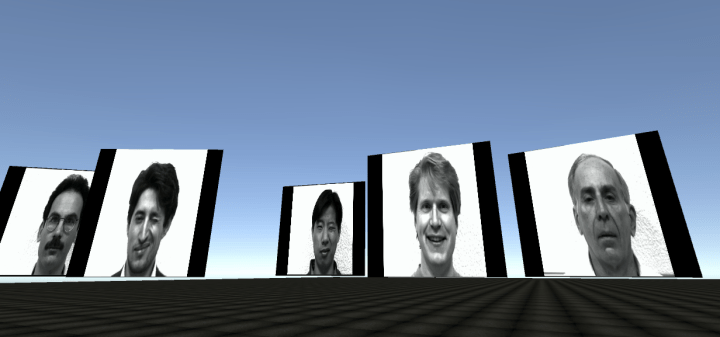

For Assignment 1, I drew on the Yale Face Database, a set of facial photos used for computer facial recognition.

These photos are interesting because the way we view them is so different from the way a computer views them when parsing them for recognition. How can we best simulate the computer’s vision, to see what the computer sees?

I decided a simple way to present this would be to place the faces on planes inside the 3D world and cycle through each person’s different expressions, essentially creating animated faces from the photos. The effect is funny, but I think it simulates, in a very basic way, how a computer would parse the expressions and join them together into an overall picture of the person’s face.
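
To make the idea concrete, here is a rough Python sketch of the cycling logic, not the code I actually used in the 3D environment. The `FacePlane` class, the `build_planes` helper, the `subject.expression` file naming, and the frame rate are all assumptions for illustration. Each plane holds one person's photos and picks which one to show based on how much time has passed.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class FacePlane:
    """One plane in the scene, showing one subject's photos (hypothetical type)."""
    subject: str
    frames: list[str]                       # one image name per expression
    position: tuple[float, float, float]    # where the plane sits in the 3D world

    def frame_at(self, elapsed_seconds: float, frames_per_second: float = 4.0) -> str:
        """Return the image to show at a given time, looping over all expressions."""
        index = int(elapsed_seconds * frames_per_second) % len(self.frames)
        return self.frames[index]


def build_planes(subjects: list[str], expressions: list[str],
                 spacing: float = 2.0) -> list[FacePlane]:
    """Lay out one plane per subject in a row along the x axis."""
    planes = []
    for i, subject in enumerate(subjects):
        # Assumed naming scheme: "<subject>.<expression>", e.g. "subject01.happy".
        frames = [f"{subject}.{expression}" for expression in expressions]
        planes.append(FacePlane(subject, frames, (i * spacing, 0.0, 0.0)))
    return planes


planes = build_planes(["subject01", "subject02"], ["happy", "sad", "wink"])
for plane in planes:
    # A quarter of a second in, each plane has moved on to its second expression.
    print(plane.position, plane.frame_at(0.25))
```

In a real scene, the render loop would call `frame_at` every frame with the current time and swap the texture on each plane, so every face appears to animate on its own.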

It was cool to be able to walk around the 3D environment among the faces, which suggests the non-linear way that a computer thinks.
